As a seasoned crypto investor with a decade of experience in this space, I’ve learned that patience is a virtue when it comes to investing in emerging technologies like tokenization. The hype surrounding the potential of this technology to transform financial markets and revolutionize the way we invest is undeniable. However, McKinsey’s cautious stance serves as a much-needed reality check.

It took Bitcoin nearly nine years to reach $10,000 for the first time, and just over twelve years to surpass $50,000. According to McKinsey, similar patience is essential when it comes to tokenization.

Real-world asset tokenization has generated significant buzz, with many believing it could bring about transformative changes in our current world.

According to a report published by 21.co last October, the market size is projected to reach an astonishing $10 trillion by 2030, with a more conservative estimate putting it at $3.5 trillion even under unfavorable conditions.

The sector has attracted a great deal of experimentation and financial backing, with tokenization platforms raising large sums of money to broaden their reach.

But according to McKinsey, a sobering reality check is needed.

Analysts at the consulting firm agree that this emerging technology holds immense potential to revolutionize the financial sector, enhance the investing experience, and significantly reduce trading costs. However, they also caution that the tokenization industry may be advancing too swiftly, potentially outpacing its ability to manage the complexities and challenges inherent in such a transformative shift.

“There have been many false starts and challenges thus far.”

McKinsey

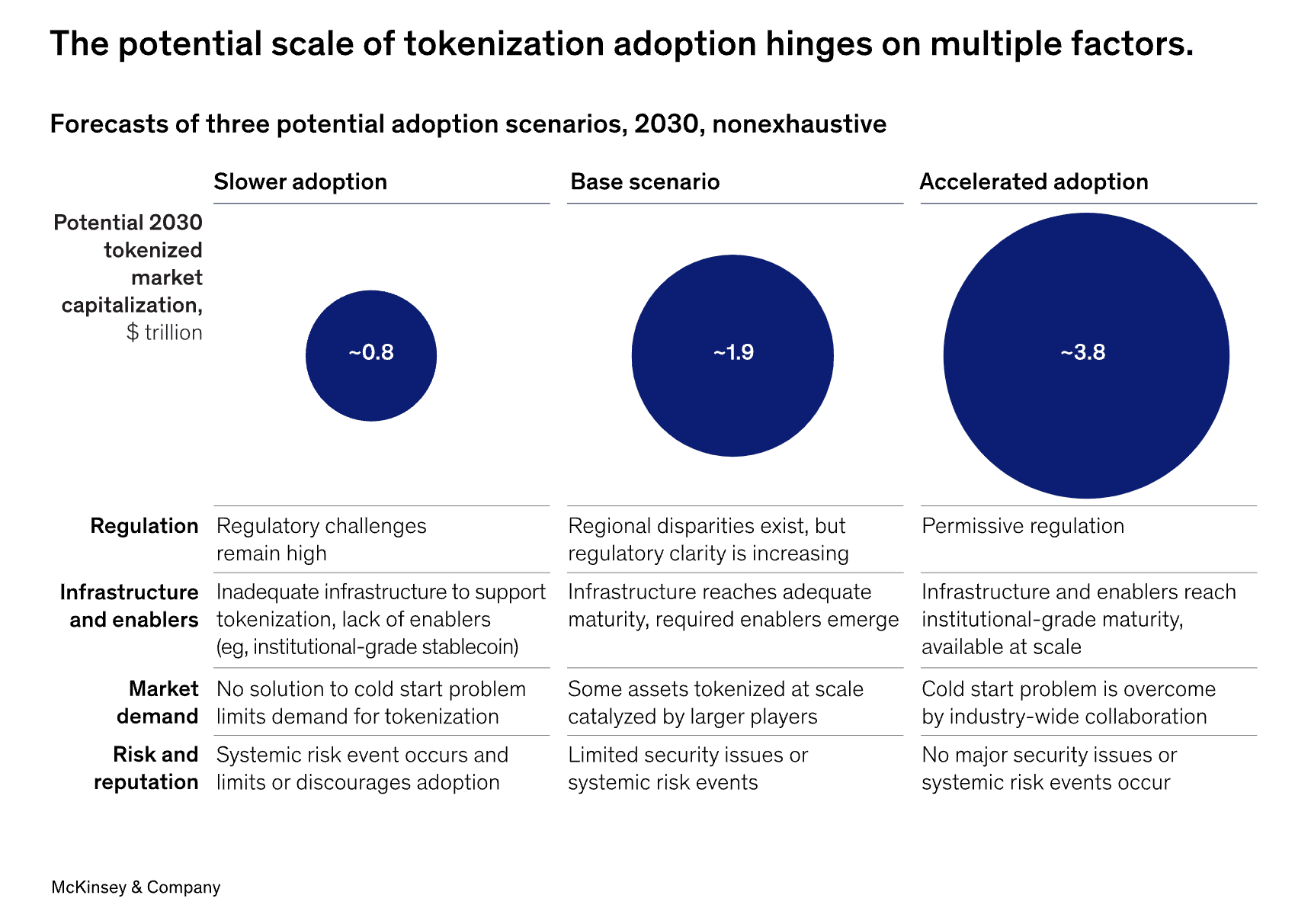

Challenging the optimistic views of astute observers who assume that all stocks and funds will be tokenized imminently, McKinsey raised doubts about the extent of progress this emerging field can make in the next six years, which is the remaining time until the end of the decade.

According to our examination, it’s likely that the total market capitalization of tokenized assets will amount to roughly $2 trillion by the year 2030. Under favorable conditions, this figure could potentially surge up to approximately $4 trillion. However, we hold a more conservative view than earlier forecasts.

McKinsey

The authors paint a grim picture in which the tokenization market could be valued at a mere $1 trillion in the most unfavorable circumstances. This is below the current market capitalization of Bitcoin.
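To put the two sets of forecasts side by side, here is a minimal sketch in Python that uses only the 2030 figures quoted in this article (in trillions of US dollars). The dictionary layout and variable names are illustrative assumptions, not anything taken from either report.

```python
# 2030 forecasts for the tokenized-asset market, in trillions of USD,
# as quoted in this article. The structure and names are illustrative only.
forecasts = {
    "21.co": {"low": 3.5, "high": 10.0},
    "McKinsey": {"low": 1.0, "base": 2.0, "high": 4.0},
}

# How many times larger 21.co's figures are than McKinsey's.
high_gap = forecasts["21.co"]["high"] / forecasts["McKinsey"]["high"]
low_gap = forecasts["21.co"]["low"] / forecasts["McKinsey"]["low"]

# The band where the two forecast ranges overlap, if any.
overlap_low = max(f["low"] for f in forecasts.values())
overlap_high = min(f["high"] for f in forecasts.values())
overlap = (overlap_low, overlap_high) if overlap_low <= overlap_high else None

print(f"Bull case: 21.co is {high_gap:.1f}x McKinsey")  # 2.5x
print(f"Bear case: 21.co is {low_gap:.1f}x McKinsey")   # 3.5x
print(f"Overlapping band (USD trillions): {overlap}")   # (3.5, 4.0)
```

On these numbers the two ranges barely touch: 21.co's pessimistic case sits just below McKinsey's optimistic one.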

This does not mean McKinsey views the technology as a passing trend; instead, it is a recognition that widespread adoption takes time. Bitcoin took nearly nine years to reach $10,000, and just over twelve to reach $50,000.

In discussing the obstacles that may hinder the broader adoption of tokenization and the achievement of a “network effect,” McKinsey identifies several challenges that early adopters are likely to encounter.

- Limited liquidity, with disappointing transaction volumes failing to deliver a robust market

- Parallel issuance on old-fashioned platforms leading to greater expense

- Long-established processes being disrupted

Due to the various complexities involved, tokenization needs to overcome an extra hurdle to fully realize its capabilities: demonstrating its superiority over existing methods. As an illustration, the paper points out that for securitized instruments like bonds, this entails:

Overcoming the “cold start” problem requires building a compelling use case: one in which the digital representation of collateral offers advantages significant enough to entice adoption.

McKinsey

Three other potential hurdles are also worthy of discussion.

According to McKinsey’s assessment, modernizing the outdated infrastructure used by the financial services industry to handle massive monetary transactions, some of which have been in place for several decades, is a complex process that calls for diligence and careful planning. Uniformity will also be essential in this endeavor.

Regulatory bodies hold significant influence over the direction of the digital asset market, but their response to emerging technologies may not be as swift as some might hope.

There also needs to be a serious examination of whether blockchains are up to the task. Scalability has been a significant concern for large-scale networks, although Layer 2 solutions have begun to offer promising relief. Blockchains also remain fragmented into separate networks that cannot interact with each other. Bridges have been proposed as a remedy, but they come with security risks and have suffered high-profile, costly hacks in the past.

McKinsey’s main point is that tokenization is an unavoidable trend, offering substantial advantages such as around-the-clock business transactions and more equitable investment conditions. Yet, it’s essential to remember that building something as complex as a modern financial system takes time.

“The expansion rate of consumer techs like the internet, smartphones, and social media, as well as financial innovations such as credit cards and Exchange-Traded Funds (ETFs), is usually the fastest – over 100% per year – during their initial five years in the market.”

McKinsey
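To give a sense of what that growth rate implies, here is a minimal sketch of the compounding arithmetic in Python. The function name is a hypothetical helper, and the calculation assumes growth of exactly 100% in each of the five years, which is the lower bound of the range in the quote.

```python
def growth_multiple(annual_rate: float, years: int) -> float:
    """Return how many times larger a market becomes after compounding
    at `annual_rate` (1.0 means 100% per year) for `years` years."""
    return (1 + annual_rate) ** years

# Doubling every year for five years: 2 * 2 * 2 * 2 * 2
multiple = growth_multiple(1.0, 5)
print(f"Growth multiple after 5 years at 100% per year: {multiple:.0f}x")  # 32x
```

In other words, a technology that merely doubles each year during its first five years ends that period 32 times larger than it began.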

Early adopters stand to reap significant benefits if they establish successful tokenization platforms and secure market leadership. However, there’s a risk they may be surpassed by newcomers boasting advanced technology, resulting in substantial investments being wasted.

As a crypto investor, I’d advise setting a reminder for 2030 to check the total value of the tokenization market against these predictions. 21.co and McKinsey hold contrasting views on the matter, and while I respect their expertise, I cannot help but wonder whether both estimates might be off the mark.

2024-07-01 16:16